Kimi K2.5: How Agent Swarm and Native Multimodality Change Open-Source AI

Moonshot AI's Kimi K2.5 represents a fundamental shift in open-source AI capabilities. Unlike traditional model releases that incrementally improve benchmarks, K2.5 introduces two architectural breakthroughs: native multimodality and self-directed agent swarms. These aren't just features—they're a reimagining of how AI models should reason, see, and act in production environments.

What Makes Kimi K2.5 Different?

Most "multimodal" models in 2026 are essentially text models with vision duct-taped to the side. They take an image, convert it to text-like tokens through a separate encoder, and lose spatial reasoning in the process. Kimi K2.5 is different—it's natively multimodal, trained on approximately 15 trillion mixed visual and text tokens from the ground up. This means it maintains spatial relationships, understands visual context, and can reason across screenshots, diagrams, documents, and videos without sacrificing language performance.

But the real game-changer is Agent Swarm. While other models require manual orchestration frameworks to coordinate multiple agents, K2.5 can automatically spawn and coordinate up to 100 sub-agents, running parallel workflows that span up to 1,500 tool calls. Moonshot AI reports that this cuts execution time by up to 4.5x compared to single-agent setups, and it happens without any predefined subagents or workflow templates.

Native Multimodality: Beyond Vision-Encoding Add-ons

The difference between native multimodality and encoder-based approaches becomes clear in practical coding scenarios. When you show K2.5 a screenshot of a web interface and ask it to replicate the layout:

- Encoder-based models project the image into the text model's token space through a separate encoder, which can blur precise positioning

- Kimi K2.5 maintains pixel-level spatial awareness while writing CSS

This native understanding extends to video as well. K2.5 can analyze gameplay footage, identify UI patterns across multiple frames, and generate responsive code that adapts to different screen sizes—all while understanding the temporal flow of the video itself.

Benchmarks That Matter

On the MMMU Pro visual reasoning benchmark, K2.5 scores 78.5%, just behind GPT-5.2 (79.5%) and ahead of Claude Opus 4.5 (74.0%). On video understanding (VideoMMMU), it achieves 86.6%, surpassing both GPT-5.2 and Claude Opus 4.5.

But the most impressive numbers come from agentic tasks:

- BrowseComp: 74.9% (agentic web browsing)

- SWE-bench Verified: 76.8% (real-world GitHub issues)

- HLE Full (with tools): 50.2% (Humanity's Last Exam)

These aren't just incremental improvements—they're state-of-the-art results for an open-weights model.

Agent Swarm: Parallel Execution Without the Boilerplate

Traditional agent frameworks require you to manually define subagent roles, craft handoff rules, and manage state synchronization. K2.5 eliminates this overhead through Parallel-Agent Reinforcement Learning (PARL)—a training paradigm where the model learns how to break down tasks and coordinate parallel execution on its own.

How It Works in Practice

When you give K2.5 a complex task like "build a full-stack dashboard with authentication, data visualization, and real-time updates," it might:

- Spawn 20+ sub-agents in parallel

- Assign specialized roles (frontend, backend, database, API integration)

- Have sub-agents communicate and coordinate without central orchestration

- Merge results into a cohesive codebase

All of this happens automatically. You don't write YAML configs, you don't define agent hierarchies, and you don't manage message passing. The model figures it out.
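The fan-out-and-merge shape described above can be made concrete with a toy sketch. This is not K2.5's mechanism: in K2.5 the decomposition is learned by the model, whereas here `plan_subtasks`, `run_subagent`, and the role names are hypothetical stand-ins, and each "sub-agent" simply waits for a moment instead of calling tools.

```python
import asyncio
import time

def plan_subtasks(task: str) -> list[str]:
    # Hypothetical fixed decomposition; K2.5 decides this itself at run time.
    roles = ["frontend", "backend", "database", "api-integration"]
    return [f"{role}: {task}" for role in roles]

async def run_subagent(subtask: str, delay: float = 0.05) -> str:
    # Stand-in for a sub-agent making tool calls; here it only sleeps.
    await asyncio.sleep(delay)
    return f"done[{subtask}]"

async def run_swarm(task: str) -> list[str]:
    subtasks = plan_subtasks(task)
    # Fan out: all sub-agents run concurrently; gather keeps input order.
    return await asyncio.gather(*(run_subagent(s) for s in subtasks))

start = time.perf_counter()
swarm_results = asyncio.run(run_swarm("build dashboard"))
elapsed = time.perf_counter() - start

for line in swarm_results:
    print(line)
print(f"4 sub-agents finished in {elapsed:.2f}s (each one waits 0.05s)")
```

Because the four simulated sub-agents wait concurrently, total wall-clock time stays close to that of a single sub-agent rather than the sum of all four, which is the effect the swarm approach is after.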

Performance Gains

Moonshot AI's internal testing shows:

- 4.5x faster execution on multi-step workflows

- 60-100 tokens/second throughput in Turbo mode

- 78.4% BrowseComp score in swarm mode (vs. 57.5% in single-agent mode)

According to Moonshot AI, the speed gains were observed on practical workloads such as web scraping, API integration, and automated testing; the BrowseComp figure is a benchmark score.
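To put the quoted figures in perspective, the short calculation below works out the absolute and relative BrowseComp gain and what a 4.5x speedup would mean for wall-clock time. The 45-minute baseline is a hypothetical example, not a number from Moonshot AI, and 4.5x is the quoted best case rather than a typical result.

```python
def absolute_gain(new: float, old: float) -> float:
    # Difference in percentage points.
    return round(new - old, 1)

def relative_gain_pct(new: float, old: float) -> float:
    # Improvement relative to the old score, in percent.
    return round((new - old) / old * 100, 1)

def time_after_speedup(baseline_minutes: float, speedup: float) -> float:
    # Wall-clock time if the whole workflow sped up by the given factor.
    return round(baseline_minutes / speedup, 1)

swarm_score, single_score = 78.4, 57.5  # BrowseComp figures quoted above

print(absolute_gain(swarm_score, single_score))      # 20.9 points
print(relative_gain_pct(swarm_score, single_score))  # 36.3 percent
print(time_after_speedup(45, 4.5))                   # 10.0 minutes (hypothetical 45-minute job)
```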

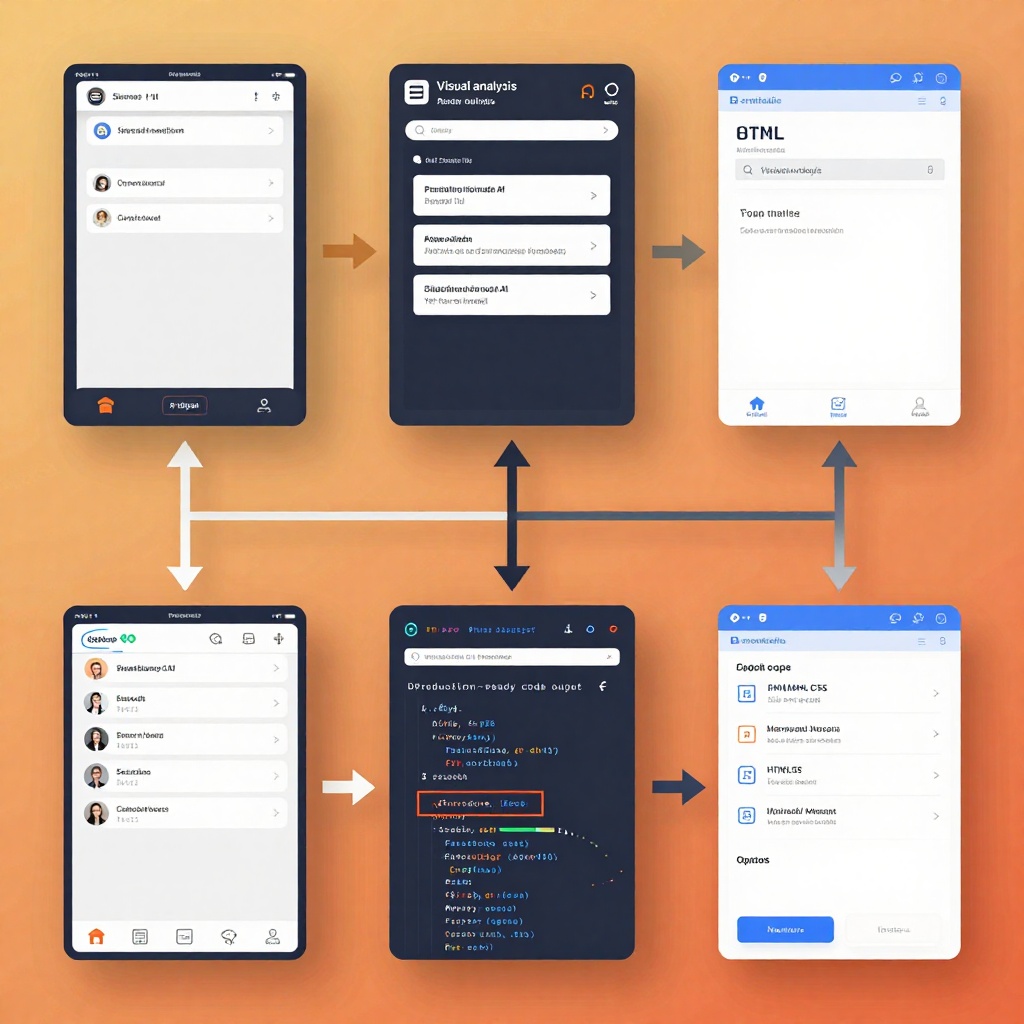

Visual Coding: From Screenshot to Production

K2.5's combination of native vision and agent swarm makes it particularly powerful for frontend development. You can:

- Upload a mockup → Get responsive HTML/CSS/JS

- Show a video walkthrough → Get full feature implementation

- Share a screenshot of a bug → Get automated debugging with visual inspection

The model can see rendered output, identify UI issues, and fix them without you ever describing the problem in text. According to the official Kimi K2.5 model card, this visual debugging capability is built into the core architecture—not bolted on as an afterthought.
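As a rough illustration of the screenshot-driven workflow, the sketch below packs an image and an instruction into a single chat message. It assumes the provider accepts OpenAI-style messages with `image_url` content parts and base64 data URLs; that is an assumption to verify against your provider's documentation. The helper name `build_visual_debug_message` and the placeholder bytes are hypothetical.

```python
import base64
import json

def build_visual_debug_message(image_bytes: bytes, instruction: str,
                               mime_type: str = "image/png") -> dict:
    # Encode the screenshot as a data URL so it can travel inside JSON.
    encoded = base64.b64encode(image_bytes).decode("ascii")
    return {
        "role": "user",
        "content": [
            {"type": "text", "text": instruction},
            {"type": "image_url",
             "image_url": {"url": f"data:{mime_type};base64,{encoded}"}},
        ],
    }

# Placeholder bytes (just the PNG signature); use a real screenshot in practice.
fake_png = b"\x89PNG\r\n\x1a\n"
message = build_visual_debug_message(
    fake_png, "The submit button overlaps the footer. Find and fix the CSS."
)
print(json.dumps(message, indent=2))
```

In real use you would read the bytes from a screenshot file and place the message in the `messages` list of a chat request.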

Real-World Performance vs. Closed Source

The question everyone asks: Is it actually better than GPT-5.2 or Claude Opus 4.5?

The answer depends on your use case:

| Benchmark | Kimi K2.5 | GPT-5.2 | Claude Opus 4.5 |
|---|---|---|---|
| HLE (w/ tools) | 50.2% | 45.5% | 43.2% |
| BrowseComp | 74.9% | ~60%* | ~65%* |
| MMMU Pro | 78.5% | 79.5% | 74.0% |
| SWE-bench Verified | 76.8% | 80.0% | 80.9% |

*Estimated from available data

K2.5 excels at agentic tasks: anything involving tool use, web browsing, or multi-step reasoning. On vision it is competitive, trailing GPT-5.2 by one point on MMMU Pro while leading Claude Opus 4.5 by 4.5 points. It trails both closed-source models by roughly three to four points on SWE-bench Verified, which measures fixes to real GitHub issues, though its visual capabilities may offset part of that gap in frontend-heavy work.
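The "depends on your use case" point can be read straight off the table. The snippet below copies the table values into a dictionary and reports the leader on each benchmark, plus how far K2.5 sits from that leader. The BrowseComp entries for the closed-source models are the article's own estimates, so treat those rows as approximate.

```python
scores = {
    "HLE (w/ tools)": {"Kimi K2.5": 50.2, "GPT-5.2": 45.5, "Claude Opus 4.5": 43.2},
    "BrowseComp":     {"Kimi K2.5": 74.9, "GPT-5.2": 60.0, "Claude Opus 4.5": 65.0},  # closed-source values estimated
    "MMMU Pro":       {"Kimi K2.5": 78.5, "GPT-5.2": 79.5, "Claude Opus 4.5": 74.0},
    "SWE-bench":      {"Kimi K2.5": 76.8, "GPT-5.2": 80.0, "Claude Opus 4.5": 80.9},
}

# Highest-scoring model per benchmark.
leaders = {bench: max(row, key=row.get) for bench, row in scores.items()}

# Points between K2.5 and the best score on each benchmark (0.0 when K2.5 leads).
gap_to_leader = {
    bench: round(max(row.values()) - row["Kimi K2.5"], 1)
    for bench, row in scores.items()
}

for bench in scores:
    print(f"{bench}: leader = {leaders[bench]}, K2.5 gap = {gap_to_leader[bench]}")
```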

How to Use Kimi K2.5

Option 1: Hosted APIs

Multiple platforms offer day-1 support:

- Fireworks AI: Up to 200 tokens/second, fastest inference

- Together AI: Optimized for long-context applications

- NVIDIA Build: Enterprise deployment with GPU acceleration

- OpenRouter: Unified API across multiple providers
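As a starting point for the hosted route, here is a minimal sketch that prepares a chat request against OpenRouter's OpenAI-compatible endpoint using only the standard library. The model identifier `moonshotai/kimi-k2.5` and the `OPENROUTER_API_KEY` variable name are assumptions; check the provider's model catalog and docs for the exact values. The request is built but not sent, so the snippet runs without a network connection or a key.

```python
import json
import os
import urllib.request

BASE_URL = "https://openrouter.ai/api/v1"  # OpenRouter's OpenAI-compatible base URL
MODEL_ID = "moonshotai/kimi-k2.5"          # assumed identifier; confirm in the provider catalog

def build_request(prompt: str, api_key: str,
                  base_url: str = BASE_URL, model: str = MODEL_ID) -> urllib.request.Request:
    payload = {"model": model, "messages": [{"role": "user", "content": prompt}]}
    return urllib.request.Request(
        url=f"{base_url}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Authorization": f"Bearer {api_key}",
                 "Content-Type": "application/json"},
        method="POST",
    )

def send(request: urllib.request.Request) -> str:
    # Performs the network call; needs a valid key and connectivity.
    with urllib.request.urlopen(request, timeout=60) as response:
        body = json.load(response)
    return body["choices"][0]["message"]["content"]

api_key = os.environ.get("OPENROUTER_API_KEY", "sk-placeholder")
request = build_request("Summarize the trade-offs of MoE models.", api_key)
print(request.get_method(), request.full_url)
```

Calling `send(request)` with a real key would return the model's reply text. Other OpenAI-compatible hosts should work by swapping `base_url` and `model`, subject to each provider's own naming.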

Option 2: Local Deployment

With 1 trillion parameters (MoE architecture, ~32B activated), running K2.5 locally requires significant hardware. Successful local deployments have been demonstrated on:

- Dual M3 Ultra Mac Studios with 512GB RAM each (using MLX)

- Enterprise GPU clusters with A100/H100 cards
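A back-of-the-envelope estimate shows why the hardware bar is so high. Although only about 32B parameters are active per token, all expert weights of an MoE model still have to be resident in memory. The sketch below counts weight storage only; it ignores the KV cache, activations, and runtime overhead, so real requirements are higher.

```python
def weight_memory_gb(total_params: float, bits_per_param: float) -> float:
    # Bytes needed for the weights alone, expressed in decimal gigabytes.
    return total_params * bits_per_param / 8 / 1e9

TOTAL_PARAMS = 1e12        # 1 trillion parameters, as stated above
DUAL_MAC_RAM_GB = 2 * 512  # two 512GB Mac Studios

for label, bits in [("16-bit", 16), ("8-bit", 8), ("4-bit", 4)]:
    needed = weight_memory_gb(TOTAL_PARAMS, bits)
    fits = "fits" if needed < DUAL_MAC_RAM_GB else "does not fit"
    print(f"{label}: {needed:.0f} GB of weights, {fits} in {DUAL_MAC_RAM_GB} GB")
```

By this estimate, only the 4-bit variant (about 500 GB of weights) leaves meaningful headroom on a dual 512GB setup, which is consistent with quantized builds being the practical choice for local runs.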

Option 3: Kimi Code (Official Tool)

Moonshot AI offers Kimi Code, an open-source coding agent that rivals Claude Code and Gemini CLI. It supports:

- Terminal integration

- IDE plugins (VS Code, Cursor, Zed)

- Image and video inputs for visual debugging

The Bigger Picture: Open Source Is Winning

K2.5 is part of a broader trend: open-source models are now matching or exceeding closed-source alternatives. As noted in industry analysis, "the lead between frontier models is quickly collapsing versus open source options." With backing from Alibaba, Moonshot AI has the resources to compete with US-based giants like OpenAI and Anthropic.

But what makes K2.5 significant isn't just its benchmarks—it's the architectural innovations that open-source models can now build on:

- Native multimodality as a baseline, not an add-on

- Self-directed agent swarms without manual orchestration

- Open-weights licensing that permits commercial use (with attribution for large-scale deployments)

Taken together, these capabilities give the open-source community a foundation to extend rather than another incremental benchmark bump.

Limitations and Considerations

No model is perfect, and K2.5 has trade-offs:

- Hardware requirements: Full performance requires significant compute resources

- Quantization impact: INT4 quantization improves speed but may reduce edge-case performance

- Beta features: Agent Swarm is still in beta, with potential for refinement

- Licensing: Commercial use requires attribution for products with more than 100 million monthly active users or more than $20 million in monthly revenue

Conclusion: A New Paradigm for Open-Source AI

Kimi K2.5 represents a meaningful shift in how we think about open-source AI. By treating agentic intelligence, parallel execution, and multimodal reasoning as first-class capabilities rather than add-ons, it moves beyond static model behavior toward real-world execution.

For developers, researchers, and enterprises, the question is no longer "can open source match closed source?"—it's "how quickly can we build on these new capabilities?"

The Agent Swarm paradigm alone makes K2.5 worth watching. If Moonshot AI can refine the beta implementation and expand beyond the current 100-sub-agent limit, we could see a fundamental rethinking of how AI systems are architected—not just as single models, but as coordinated swarms that can tackle problems of unprecedented complexity.

The era of open-source AI playing catch-up is over. With Kimi K2.5, the open-source community is setting the direction for where agentic AI goes next.

Want to try Kimi K2.5? Check out the official documentation on Moonshot AI's platform or access it via Fireworks AI for the fastest inference speeds.
